Utilizing AI and data analysis to fight cancer.

Telling the difference between benign and cancerous thyroid nodules before surgery is notoriously challenging, but a new study combining AI and data analysis techniques is yielding surprisingly accurate cancer predictions.

‘Structural poverty’ maps could steer help to world’s neediest.

Leveraging national surveys, big-data advances and machine learning, Cornell researchers have piloted a new approach to mapping poverty that could help policymakers identify the neediest people in poor countries and target resources more effectively.

Statistics that tell the whole truth? It’s as easy as ABC

Dan Kowal, M.S. ’15, Ph.D. ’17, associate professor of statistics and data science, has come up with a method he believes can boost statistical power and significantly reduce bias – vital for research involving outcomes that differ by socioeconomics, race, sex and other variables.

Research Excellence

Home to IMS Fellows and NSF CAREER recipients, Statistics and Data Science is widely recognized for research excellence.

Statistics and Data Science Research Areas

Asymptotic statistics studies the properties of statistical estimators, tests, and procedures as the sample size tends to infinity, and finds approximations that can be used in practical applications when the sample size is finite.
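As a rough illustration of using a large-sample approximation at a finite sample size, the sketch below simulates how often a 95% normal-approximation interval for the mean covers the true value. The exponential data, the sample sizes, and the function name `normal_interval_coverage` are assumptions chosen for illustration, not taken from any particular study.

```python
import math
import random
import statistics

def normal_interval_coverage(n, reps=2000, seed=0):
    """Share of simulated samples whose 95% normal-approximation
    interval for the mean covers the true mean (1.0 for Exp(1))."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    true_mean = 1.0
    z = 1.96  # approximate 97.5% quantile of the standard normal
    hits = 0
    for _ in range(reps):
        sample = [rng.expovariate(1.0) for _ in range(n)]
        mean = statistics.fmean(sample)
        se = statistics.stdev(sample) / math.sqrt(n)
        if mean - z * se <= true_mean <= mean + z * se:
            hits += 1
    return hits / reps

# Illustrative sample sizes: one small, one moderately large.
coverage_small = normal_interval_coverage(n=10)
coverage_large = normal_interval_coverage(n=200)
print(f"n=10:  coverage {coverage_small:.3f}")
print(f"n=200: coverage {coverage_large:.3f}")
```

With skewed data such as these, the interval would be expected to fall short of its nominal 95% coverage at small n and move closer to it as n grows, which is the kind of finite-sample behavior asymptotic theory helps quantify.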

Bayesian statistics provides a mathematical data analysis framework for representing uncertainty and incorporating prior knowledge into statistical inference.
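One minimal way to see prior knowledge being combined with data is the conjugate Beta-Binomial update sketched below. The Beta(2, 2) prior and the counts of 7 successes and 3 failures are made-up values for illustration, and the helper names are hypothetical.

```python
def beta_binomial_update(prior_a, prior_b, successes, failures):
    """Posterior Beta parameters after observing binomial data
    under a Beta(prior_a, prior_b) prior on the success probability."""
    return prior_a + successes, prior_b + failures

def beta_mean(a, b):
    """Mean of a Beta(a, b) distribution."""
    return a / (a + b)

# Hypothetical prior belief centered at 0.5, then 7 successes in 10 trials.
prior = (2.0, 2.0)
posterior = beta_binomial_update(*prior, successes=7, failures=3)
posterior_mean = beta_mean(*posterior)
print(posterior, round(posterior_mean, 3))
```

In this sketch the posterior mean lands between the prior mean (0.5) and the observed proportion (0.7), and the posterior parameters carry the remaining uncertainty about the success probability.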

The goal of causal inference is to develop a formal statistical framework that answers causal questions from real-world data.

Clinical trials are research studies designed to test the safety and effectiveness of medical treatments, drugs, or medical devices.

Econometrics applies statistical methods to analyze and model economic data. It provides a way to test economic theories and make predictions about economic events.

Empirical process theory provides a rigorous mathematical basis for central limit theorems, large deviation theory, weak convergence, and convergence rates. It is widely used in many areas of statistics and has applications in fields such as machine learning, probability theory, and mathematical finance.

Functional data analysis is a branch of statistics that analyzes data providing information about curves, surfaces, or anything else varying over a continuum.

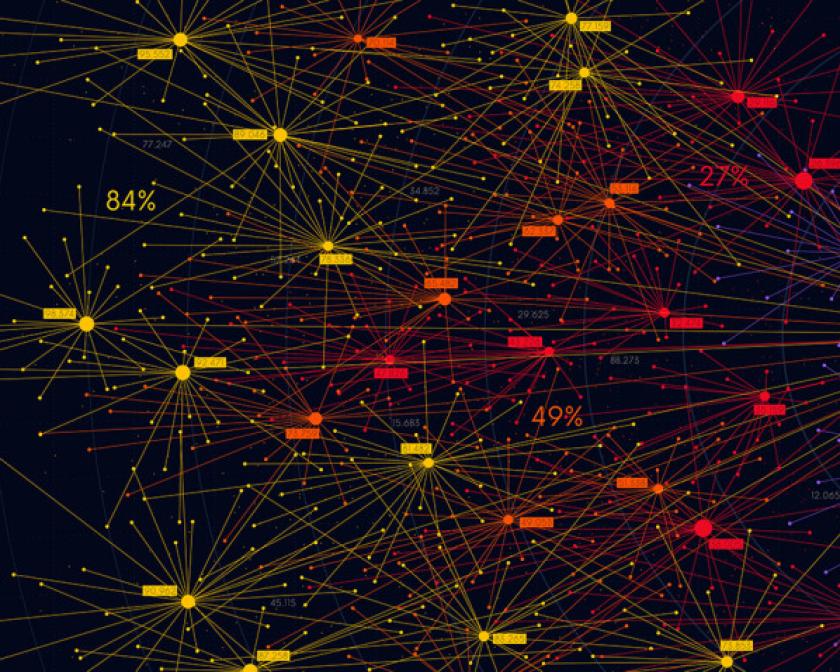

A graphical model, or probabilistic graphical model, is a statistical model which can be represented by a graph. The vertices correspond to random variables, and edges between vertices indicate a conditional dependence relationship.

In statistical theory, the field of high-dimensional statistics studies data whose dimension is larger than typically considered in classical multivariate analysis.

Machine learning is a field of inquiry devoted to understanding and building methods that “learn,” that is, methods that leverage data to improve performance on some set of tasks.

Model selection is the task of using data to select a statistical model from a set of candidate models. Instances include tuning parameter selection, feature selection in regression and classification, pattern recovery, and nonparametric estimation.

Spatial statistics is the study of models, methods, and computational techniques for spatially referenced data, with the goal of making predictions of and drawing inferences about spatial processes.

Using techniques from statistics, computer science and bioinformatics, statistical geneticists help gain insight into the genetic basis of phenotypes and diseases.

A stochastic process is a family of random variables, where each member is associated with an index from an index set. The type of variable is general, but common specifications are scalar-, vector-, matrix-, or function-valued random variables.

The study of the high-dimensional aspects of optimal transport is born from the need to address modern challenges in statistical optimal transport, and involves developing new notions of optimal transport that avoid the curse of dimensionality and the computational burden associated with classical approaches.

The Cornell Statistical Consulting Unit

The Cornell Statistical Consulting Unit is a research support unit whose mission is to support Cornell faculty, staff, and students with study design, data analysis, and the use of statistics in their research.
